[Case 02]

IRIS

Accessibility / Health Tech

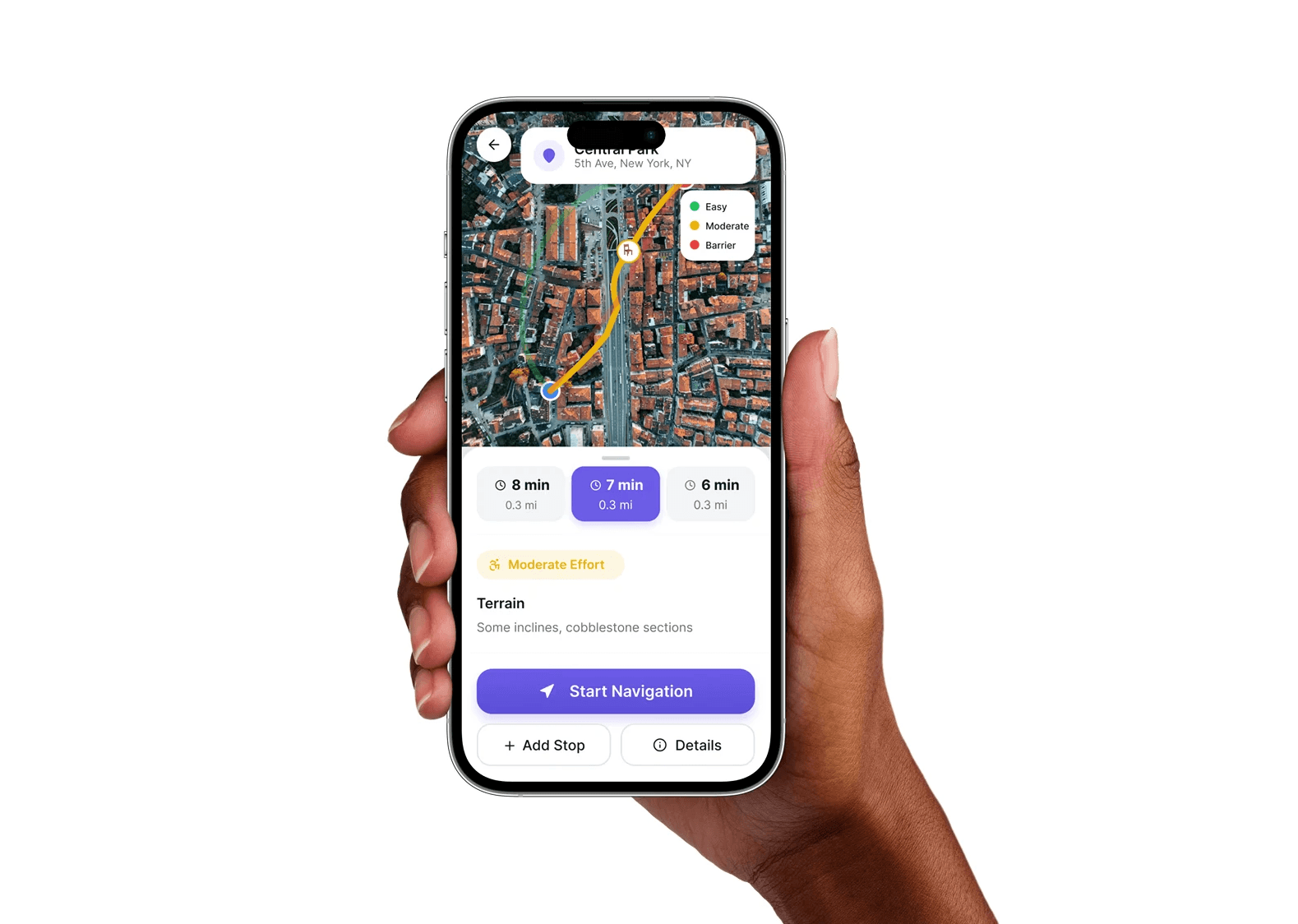

Companion Navigation for an AR Accessibility System

[Project Overview]

An accessibility navigation system that helps users anticipate barriers, physical effort, and sensory strain before walking begins. The companion mobile app supports route planning and safer decision-making in advance.

[Problem Statement]

Accessibility barriers are often discovered only after users encounter them. Existing navigation tools do not account for physical effort, terrain difficulty, or sensory conditions in advance.

[Industry]

Accessibility / Health Tech

[My Role]

UX Designer

[Platforms]

Mobile

[Timeline]

March 2026

[Persona]

Marco Rossi

Retired Mechanic

I just moved to a new city and want to walk independently without pain, confusion, or getting lost.

Age: 68

Location: New York City

Tech Proficiency: Low

Gender: Male

[Goals]

Navigate unfamiliar places safely and independently.

Avoid routes that cause physical strain or confusion.

Maintain independence without relying on others.

[Frustrations]

Unexpected stairs, difficult terrain, or inaccessible entrances.

Navigation systems that are difficult to understand.

Uncertainty about physical effort and safety.

[Process]

[01] User Research

Developed a proto-persona to define key mobility, cognitive, and navigation needs.

Built use-case scenarios and journey flows to map where users encounter uncertainty, strain, and barriers.

Identified core pain points around predictability, independence, and accessible decision-making.

[02] Insights

Accessibility barriers are often discovered only after users encounter them.

Uncertainty is a major source of stress during navigation.

Users need to be able to trust the platform with their personal information.

[03] Design Solution

Simplified the navigation process into three steps: preview route, adjust preferences, start.

Added features such as saved routes, trusted contacts, and emergency support.

Added autofill suggestions and real-time error feedback.

[04] Testing & Iteration

Tested the concept across walking, medical, and errand use cases.

Reviewed edge cases including false signals, battery failure, and trusted contact misuse.

Refined the system with live data priority, fail-safe routes, and view-only trusted access.

[Outcome]

Enabled users to anticipate accessibility conditions before movement.

Reduced uncertainty and cognitive load during navigation.

Improved perceived independence, confidence, and safety.

[Key Learnings]

Clarity reduces stress

Users need to understand conditions before they act.

Accessibility must be visible

Barriers matter most when users cannot anticipate them.

Systems need clear roles

Planning and real-time guidance should do different jobs.